Tutorial

This tutorial covers the well known usage of BDC-Catalog data management integrating with Python

SQLAlchemy.

Its applicable to users who want to learn how to use Brazil Data Cube Catalog module in runtime and

for users that have existing applications or related learning material for BDC-Catalog.

Requirement

For this tutorial, we will use an instance of PostgreSQL with PostGIS support

SQLALCHEMY_DATABASE_URI=postgresql://postgres:postgres@localhost/bdc

You can set up a minimal PostgreSQL instance with Docker with the following command:

docker run --name bdc_pg \

--detach \

--volume bdc_catalog_vol:/var/lib/postgresql/data \

--env POSTGRES_PASSWORD=postgres \

--publish 5432:5432 \

postgis/postgis:12-3.0

Note

You may, optionally, skip this step if you have already a running PostgreSQL with PostGIS supported.

Note

Consider to have a minimal module init as mentioned in Usage - Development. You may use the following statement to initialize through Python context:

from bdc_catalog import BDCCatalog

from flask import Flask

app = Flask(__name__)

app.config['SQLALCHEMY_DATABASE_URI'] = 'postgresql://postgres:postgres@localhost:5432/bdc'

BDCCatalog(app)

with app.app_context():

# Any operation related database here

pass

Since the BDC-Catalog were designed to work with Flask API framework, remember to always use it while app.app_context() as above.

BDC-Catalog & STAC Spec

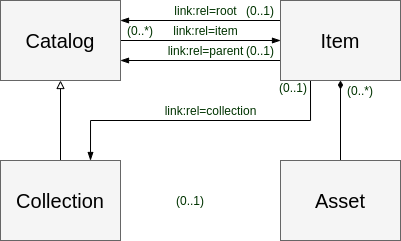

The BDC-Catalog was proposed to implement the STAC Spec.

The STAC Spec is a way to standardize the geospatial assets metadata as catalog along the service applications.

With the database model BDC-Catalog, the Brazil Data Cube implements the STAC API Spec in the module

BDC-STAC. Currently, the BDC-STAC is supporting the STAC API 1.0.

The diagram depicted in the figure below contains the most important concepts behind the STAC data model:

The description of the concepts below are adapted from the STAC Specification:

Item: a STAC Item is the atomic unit of metadata in STAC, providing links to the actual assets (including thumbnails) that they represent. It is a GeoJSON Feature with additional fields for things like time, links to related entities and mainly to the assets. According to the specification, this is the atomic unit that describes the data to be discovered in a STAC Catalog or Collection.Asset: a spatiotemporal asset is any file that represents information about the earth captured in a certain space and time.Catalog: provides a structure to link various STAC Items together or even to other STAC Catalogs or Collections.Collection: is a specialization of the Catalog that allows additional information about a spatiotemporal collection of data.

Collection Management

The model bdc_catalog.models.Collection follows the signature of STAC Collection Spec.

A collection is a specialization of the Catalog that allows additional information about a spatiotemporal collection of data.

In bdc_catalog.models.Collection, we have the fields as described below:

title: A short descriptive title for the Collection.name: A short name representation for the Collection. This value act as Unique value when combined withversionwith following signature:name-version.description: A detailed description to fully explain the collection.version: Version definition. This value act as Unique value when combined withnamewith following signature:name-version.properties: A map of collection properties like sensor, platform, etc. This field is combined and shown in STAC Server.is_available: Flag to determine the Collection availability. When value isFalsemeans that collection should not be shown inCatalog.keywords: List of keywords describing the collection. This field is used in STAC Server.collection_type: Enum type to describe the collection. The supported values are:collection,cube,classificationandmosaic.category: Enum type to identify collection kind. The supported values are:eofor Electro-Optical sensorssarfor Synthetic-Aperture Radar sensors,lidarfor LiDAR imageryunknownfor others datasets.

temporal_composition_schema: A structure representing the temporal step which the collection were built. This field is OPTIONAL. Follows the BDC Temporal Compositing.composite_function_id: The Temporal Compositing function used to generate a data cube. This field is OPTIONAL.

Create Collection

As mentioned in the section Collection Management, the model bdc_catalog.models.Collection requires a few fields to be

filled out. A minimal way to create collection is:

from bdc_catalog import BDCCatalog

from bdc_catalog.models import Collection

from flask import Flask

app = Flask(__name__)

app.config['SQLALCHEMY_DATABASE_URI'] = 'postgresql://postgres:postgres@localhost:5432/bdc'

BDCCatalog(app)

with app.app_context():

collection = Collection()

collection.name = 'S2_L1C'

collection.version = '1'

collection.title = 'Sentinel-2 - MSI - Level-1C'

collection.properties = {

"platform": "sentinel-2",

"instruments": [

"MSI"

]

}

collection.category = 'eo'

collection.collection_type = 'collection'

collection.keywords = ["eo", "sentinel", "msi"]

collection.is_available = True

collection.save()

You can also create a collection using a minimal func helper named bdc_catalog.utils.create_collection():

from bdc_catalog.utils import create_collection

create_collection(name='S2_L1C', version='1', title='Sentinel-2 - MSI - Level-1C', **parameters)

Note

Optionally, you can load a Collection using a minimal command line from bdc_catalog.cli.load_data():

bdc-catalog load-data --ifile examples/fixtures/sentinel-2.json

Create Band

The model bdc_catalog.models.Band aggregates the collection,

Optionally, you may set extra metadata for a band using bdc_catalog.models.MimeType and bdc_catalog.models.ResolutionUnit.

The model bdc_catalog.models.MimeType deals with supported content types for bdc_catalog.models.Band

and indicates the nature and format of assets.

from bdc_catalog import BDCCatalog

from bdc_catalog.models import MimeType

from flask import Flask

app = Flask(__name__)

app.config['SQLALCHEMY_DATABASE_URI'] = 'postgresql://postgres:postgres@localhost:5432/bdc'

BDCCatalog(app)

with app.app_context():

mimetypes = [

'image/png',

'image/tiff', 'image/tiff; application=geotiff',

'image/tiff; application=geotiff; profile=cloud-optimized',

'text/plain',

'text/html',

'application/json',

'application/geo+json',

'application/x-tar',

'application/gzip'

]

for mimetype in mimetypes:

mime = MimeType(name=mimetype)

mime.save()

The model bdc_catalog.models.ResolutionUnit specifies the unit spatial resolution for bdc_catalog.models.Band.

So it can be represented as: Meter (m), degree, centimeters (cm), etc. The following snippet is used to create a new

resolution unit.

from bdc_catalog.models import ResolutionUnit

resolutions = [

('Meter', 'm'),

('Centimeter', 'cm'),

('Degree', '°')

]

for name, symbol in resolutions:

res = ResolutionUnit()

res.name = name

res.symbol = symbol

res.save()

Access Collections

In order to search for Collections, please, take a look in the next query. To retrieve all collections from database use:

from bdc_catalog import BDCCatalog

from bdc_catalog.models import Collection, db

from flask import Flask

app = Flask(__name__)

app.config['SQLALCHEMY_DATABASE_URI'] = 'postgresql://postgres:postgres@localhost:5432/bdc'

BDCCatalog(app)

with app.app_context():

# List all collections

collections = (

Collection.query()

.all()

)

print(f"Collections: {','.join([c.identifier for c in collections])}")

You can increment the query and restrict to show only available collections:

from bdc_catalog import BDCCatalog

from bdc_catalog.models import Collection, db

from flask import Flask

with app.app_context():

# List only available collections

collections = (

Collection.query()

.filter(Collection.is_available.is_(True))

.all()

)

print(f"Available Collections: {','.join([c.identifier for c in collections])}")

A collection, essentially, has a few unique keys. Its defined by both id and Name-Version.

from bdc_catalog import BDCCatalog

from bdc_catalog.models import Collection, db

from flask import Flask

with app.app_context():

# Retrieve a collection by identifier

collection = Collection.get_by_id("S2_L1C-1") # or Collection.get_by_id(Id)

print(f"Collection: {collection.identifier}")

# Or verbose way

collection = (

Collection.query()

.filter(Collection.identifier == "S2_L1C-1") # or Collection.id == Id

.first()

)

For spatial query constraints, consider to use special SQLAlchemy func to generate SQL expressions in runtime. In this case, we are going to use ST_Intersects:

from bdc_catalog import BDCCatalog

from bdc_catalog.models import Collection, db

from flask import Flask

with app.app_context():

roi = box(-54, -12, -53, -11)

collections = (

Collection.query()

.filter(func.ST_Intersects(Collection.spatial_extent, geom_to_wkb(roi)),

Collection.start_date >= '2022-01-01')

.all()

)

print(f"Collections Filter ({roi.wkt}): {','.join([c.identifier for c in collections])}")

Note

Remember that property bdc_catalog.models.Collection.spatial_extent are updated whenever an item is inserted in database using SQL Triggers.

Update collection

The process to update any collection is quite simple. With support of SQLAlchemy, there a few ways to update a collection, which are:

from bdc_catalog import BDCCatalog

from bdc_catalog.models import Collection, db

from flask import Flask

with app.app_context():

# Retrieve a collection by identifier

collection = Collection.get_by_id("S2_L1C-1") # or Collection.get_by_id(Id)

# Update collection

collection.title = 'Sentinel-2 - Level-1C'

collection.save()

# Alternative ways to update

(

db.session.query(Collection)

.filter(Collection.identifier == "S2_L1C-1")

.update({"title": 'Sentinel-2 - Level-1C'}, synchronize_session="fetch")

)

db.session.commit()

If you would like to update several collections once, you have to adapt the query and then update in cascade as following:

from bdc_catalog import BDCCatalog

from bdc_catalog.models import Collection, db

from flask import Flask

with app.app_context():

# Mark all EO collections as available.

statement = (

update(Collection)

.where(Collection.category == 'eo')

.values(is_available=True)

)

db.session.execute(statement)

db.session.commit()

Item Management

The model bdc_catalog.models.Item follows the assignature of STAC Spec definition.

According to STAC Spec Item, an item

represents an atomic collection of inseparable data and metadata, which its geo-located feature using GeoJSON Spec with additional

fields for things like time, links to related entities and mainly to the assets.

The STAC Item has Assets which file that represents information about the earth captured in a certain space and time.

In bdc_catalog.models.Item, we have the fields as described below:

cloud_cover: Field describing the cloud cover factor. It will be transpiled aseo:cloud_coverinSTAC Item properties.is_available: Flag to determine the Item availability. When value isFalsemeans that item should not be shown inCatalog.tile_id: Tile identifier relationship of Item.metadata: The metadata related with Item. All properties inside this field acts likeSTAC Item properties.provider_id: Item origin. Follows the STAC Provider Object.footprint: Item footprint geometry. It consists in aGeometry(Polygon, 4326). As others modern GIS applications, we recommend thatfootprintshould be simplified geometry.bbox: Item footprint bounding box. It consists in aGeometry(Polygon, 4326).

Create Item

Note

This is just an example of how to publish an item in BDC-Catalog. You must need to have the directory containing the mentioned files to execute the example properly.

Consider you have a directory named S2A_MSIL1C_20210527T150721_N0300_R082_T19LBL_20210527T183627

containing a set of files to publish:

S2A_MSIL1C_20210527T150721_N0300_R082_T19LBL_20210527T183627:B02.tifB03.tifB04.tifthumbnail.png

You can register this item as following:

import shapely.geometry

from bdc_catalog import BDCCatalog

from bdc_catalog.models import Collection, Item

# We recommend to import bdc_catalog.utils.geom_to_wkb to transform shapely GEOM to WKB

from bdc_catalog.utils import geom_to_wkb

from flask import Flask

app = Flask(__name__)

app.config['SQLALCHEMY_DATABASE_URI'] = 'postgresql://postgres:postgres@localhost:5432/bdc'

BDCCatalog(app)

with app.app_context():

collection = Collection.get_by_id('S2_L1C-1')

name = "S2A_MSIL1C_20210527T150721_N0300_R082_T19LBL_20210527T183627"

geometry: shapely.geometry.Polygon = shapely.geometry.shape({

"type": "Polygon",

"coordinates": [[[-70.731002, -9.131078],

[-70.726482, -8.138363],

[-71.722423, -8.132908],

[-71.729545, -9.124947],

[-70.731002, -9.131078]]]

})

# Let's create a new Item definition

item = Item(collection_id=collection.id, name=name)

item.cloud_cover = 0

item.start_date = item.end_date = "2021-05-27T15:07:21"

item.footprint = geom_to_wkb(geometry, srid=4326)

item.bbox = geom_to_wkb(geometry.envelope, srid=4326)

item.is_available = True

for band in ['B02.tif', 'B03.tif', 'B04.tif', 'thumbnail.png']:

item.add_asset(name=band.split('.')[0], # Use only name as asset key

file=f"S2A_MSIL1C_20210527T150721_N0300_R082_T19LBL_20210527T183627/{band}",

role=["data"],

href=f"/s2-l1c/19/L/BL/2021/S2A_MSIL1C_20210527T150721_1/{band}")

item.save()

Note

We strongly recommend you to use bdc_catalog.utils.geom_to_wkb() to convert geometries into WKB instead WKT to avoid floating precision errors.

Note

We also suggest you to keep the footprint as simplified geometries. It optimizes the queries while searching for spatial areas in PostgreSQL/PostGIS.

Access Items

In order to search for Items, please, take a look in the simple query.

Consider you have a collection instance object. To retrieve all items from the given collection,

use as following:

from bdc_catalog import BDCCatalog

from bdc_catalog.models import Collection, Item, Tile, db

from flask import Flask

from shapely.geometry import box

from sqlalchemy import func

app = Flask(__name__)

app.config['SQLALCHEMY_DATABASE_URI'] = 'postgresql://postgres:postgres@localhost:5432/bdc'

BDCCatalog(app)

with app.app_context():

# Use collection as reference

collection = Collection.get_by_id('S2_L1C-1')

items = (

Item.query()

.filter(Item.collection_id == collection.id)

.all()

You can also increment the query, delimiting restriction of cloud_cover less than 50% (only available items):

from bdc_catalog import BDCCatalog

from bdc_catalog.models import Collection, Item, Tile, db

from flask import Flask

from shapely.geometry import box

from sqlalchemy import func

app = Flask(__name__)

app.config['SQLALCHEMY_DATABASE_URI'] = 'postgresql://postgres:postgres@localhost:5432/bdc'

BDCCatalog(app)

with app.app_context():

# Use collection as reference

collection = Collection.get_by_id('S2_L1C-1')

items = (

Item.query()

.filter(Item.collection_id == collection.id,

Item.cloud_cover <= 50,

Item.is_available.is_(True))

.order_by(Item.start_date.desc())

.all()

)

print(f'Items (cloud <= 50%): {[i.name for i in items[:10]]}')

Since the BDC-Catalog integrates with SQLAlchemy ORM, you can join relationship tables and then query by bdc_catalog.models.Tile for example:

from bdc_catalog import BDCCatalog

from bdc_catalog.models import Collection, Item, Tile, db

from flask import Flask

from shapely.geometry import box

from sqlalchemy import func

app = Flask(__name__)

app.config['SQLALCHEMY_DATABASE_URI'] = 'postgresql://postgres:postgres@localhost:5432/bdc'

BDCCatalog(app)

with app.app_context():

# Use collection as reference

collection = Collection.get_by_id('S2_L1C-1')

items = (

db.session.query(Item)

.join(Tile, Tile.id == Item.tile_id)

.filter(Item.collection_id == collection.id,

Tile.name == '23LLG')

.order_by(Item.start_date.desc())

.all()

)

print(f'Items (from tile): {[i.name for i in items[:10]]}')

If you would like to make a spatial query condition to filter a region of interest (ROI), use may use the following syntax:

from bdc_catalog import BDCCatalog

from bdc_catalog.models import Collection, Item, Tile, db

from flask import Flask

from shapely.geometry import box

from sqlalchemy import func

app = Flask(__name__)

app.config['SQLALCHEMY_DATABASE_URI'] = 'postgresql://postgres:postgres@localhost:5432/bdc'

BDCCatalog(app)

with app.app_context():

# Use collection as reference

collection = Collection.get_by_id('S2_L1C-1')

roi = box(-54, -12, -53, -11)

items = (

db.session.query(Item)

.join(Tile, Tile.id == Item.tile_id)

.filter(func.ST_Intersects(Item.bbox, roi), # Intersect by ROI

Item.start_date >= '2022-01-01')

.order_by(Item.start_date.desc())

.all()

)

print(f'Items (from roi): {[i.name for i in items[:10]]}')

Note

Whenever the entry Item.query() is used, it retrieves ALL columns from bdc_catalog.models.Item.

Depending your application, you may face performance issues due total amount of affected items.

Since we are integrating with SQLAlchemy, you can specify desirable fields as following:

from bdc_catalog.models import db

items = (

db.session.query(Item.name, Item.cloud_cover, Item.assets)

.filter(Item.collection_id == collection.id,

Item.cloud_cover <= 50,

Item.is_available.is_(True))

.order_by(Item.start_date.desc())

.all()

)

for item in items:

# It injects `.name`, `.cloud_cover`, `.assets` by default in SQLAlchemy

print(item.name)

Essentially, the query structure is similar. Keep in mind that the given query retrieves only

specified fields. With this, you don’t have a reference to the bdc_catalog.models.Item.

Processor & ItemsProcessors

The model bdc_catalog.models.ItemsProcessors extends the bdc_catalog.models.Item adding support to

relate Items with bdc_catalog.models.Processor, similar in STAC Processing.

In other words, it indicates from which processing chain the bdc_catalog.models.Item originates and

how the data itself has been produced. It makes a Item traceability and search among the processing levels.

A processor can be created as following:

from bdc_catalog import BDCCatalog

from bdc_catalog.models import Collection, Item, Processor, db

from flask import Flask

app = Flask(__name__)

app.config['SQLALCHEMY_DATABASE_URI'] = 'postgresql://postgres:postgres@localhost:5432/bdc'

BDCCatalog(app)

with app.app_context():

processor = Processor.query().filter(Processor.name == 'Sen2Cor').first()

if processor is None:

with db.session.begin_nested():

processor = Processor()

processor.name = 'Sen2Cor'

processor.facility = 'Copernicus Sentinel-2 Level 2A'

processor.level = 'L2A'

processor.version = '2.10'

processor.uri = 'https://step.esa.int/main/snap-supported-plugins/sen2cor/'

processor.save(commit=False)

db.session.commit()

To attach the item with Sen2Cor, you may use as following:

from bdc_catalog import BDCCatalog

from bdc_catalog.models import Collection, Item, Processor, db

from flask import Flask

app = Flask(__name__)

app.config['SQLALCHEMY_DATABASE_URI'] = 'postgresql://postgres:postgres@localhost:5432/bdc'

BDCCatalog(app)

with app.app_context():

processor = Processor.query().filter(Processor.name == 'Sen2Cor').first()

# Attach to an item: Find item

collection = Collection().get_by_id('S2_L1C-1')

item: Item = (

Item.query()

.filter(Item.collection_id == collection.id,

Item.name == 'S2A_MSIL1C_20210527T150721_N0300_R082_T19LBL_20210527T183627')

.first()

)

item.add_processor(processor)

item.save()

print(f'Item {item.name}:')

print(f'-> Processors: {", ".join([p.name for p in item.processors])}')

Note

Optionally, you may use the verbose way manually relating bdc_catalog.models.Item and bdc_catalog.models.Processor using bdc_catalog.models.ItemsProcessors as following:

from bdc_catalog.models import ItemsProcessors, db

with db.session.begin_nested():

item_processor = ItemsProcessors()

item_processor.item_id = item.id

item_processor.processor = processor

item_processor.save(commit=False)

db.session.commit()

After make a relationship between Item and Processor using ItemsProcessors, you can access the

relationship with command:

item.get_processors()